Neuromorphic Edge AI: Why the Future of Intelligence Will Mimic the Brain, Not the Computer

Artificial intelligence has spent the last several years scaling through brute force. Bigger models. Bigger GPUs. Bigger power bills. Bigger infrastructure footprints. That approach has its place, but at the edge, it starts to break down.

Edge environments do not reward waste. They reward speed, efficiency, resilience, and local decision-making. That is why Neuromorphic Edge AI matters.

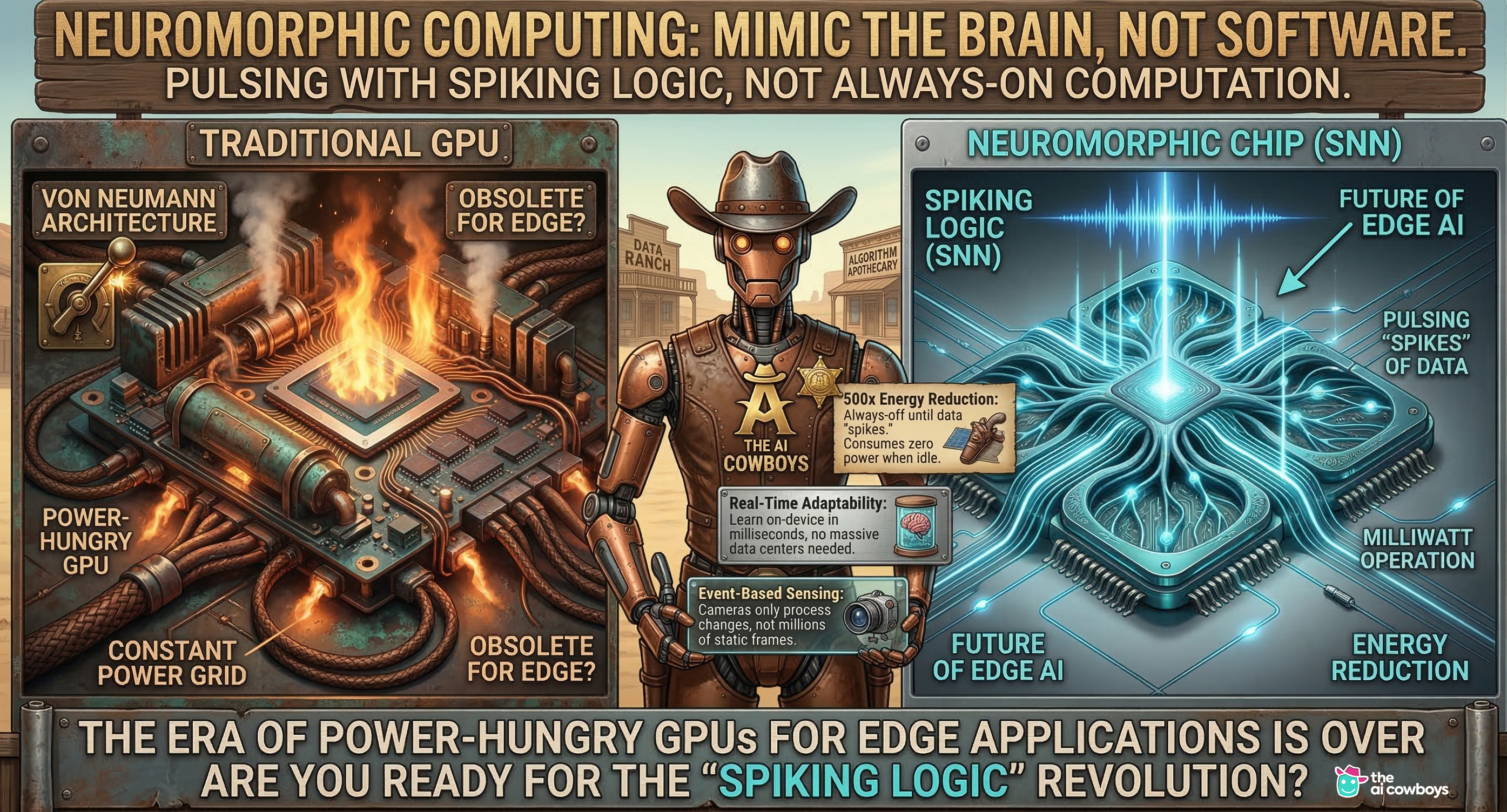

Instead of processing everything all the time, as in a traditional system, neuromorphic computing mimics the brain. It works through spiking neural networks that activate only when meaningful information appears. In other words, the system is not constantly burning energy just to sit there and look busy. It reacts when it needs to react.

For government, defense, cybersecurity, industrial monitoring, robotics, and autonomous systems, the future will not belong only to whoever has the largest model. It will belong to whoever has the most operationally effective architecture.

What Neuromorphic Edge AI actually means

Traditional computing architectures are built around constant processing. Data moves back and forth, instructions keep cycling, and power is consumed whether or not the system is handling meaningful change.

Neuromorphic Edge AI takes a different path.

It is designed around event-driven behavior, much closer to how biological neurons work. A neuromorphic system does not stay fully engaged at all times. It “fires” when something important happens. That makes it especially powerful in environments where there is a constant stream of sensory input but only a small fraction of that input actually requires a response.
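To make the "fires when something important happens" idea concrete, here is a minimal sketch of a leaky integrate-and-fire neuron, the textbook building block of many spiking neural networks. The class name, threshold, leak factor, and input signal are all illustrative assumptions, not values from any particular neuromorphic chip or from The AI Cowboys' system.

```python
from dataclasses import dataclass


@dataclass
class LIFNeuron:
    """Leaky integrate-and-fire neuron (textbook model, illustrative values)."""
    threshold: float = 1.0   # membrane potential at which the neuron fires
    leak: float = 0.9        # fraction of potential retained each time step
    potential: float = 0.0   # current membrane potential

    def step(self, input_current: float) -> bool:
        """Advance one time step; return True if the neuron spikes."""
        # Old potential decays, new input is accumulated on top of it.
        self.potential = self.potential * self.leak + input_current
        if self.potential >= self.threshold:
            self.potential = 0.0  # reset after firing
            return True
        return False


def spike_times_for(inputs):
    """Return the time steps at which a fresh neuron spikes for the given inputs."""
    neuron = LIFNeuron()
    return [t for t, current in enumerate(inputs) if neuron.step(current)]


# Hypothetical input: mostly quiet, with a short burst of activity at steps 5 and 6.
signal = [0.0, 0.05, 0.0, 0.02, 0.0, 0.6, 0.7, 0.1, 0.0, 0.0]
spike_times = spike_times_for(signal)
print(spike_times)  # [6]
```

The small background values leak away without ever reaching the threshold, so the neuron stays silent until the burst arrives. On neuromorphic hardware, that silence is where the energy savings are expected to come from; this pure-Python loop only illustrates the behavior, not the power profile.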

Instead of asking, “How do we cram traditional AI into smaller hardware?” the better question becomes, “How do we build AI that behaves the right way for the environment it operates in?”

Why this matters now

The edge is becoming one of the most important battlegrounds in AI deployment.

Organizations want systems that can operate in real time, on constrained power, without depending on constant cloud access or oversized infrastructure. They want AI that can process anomalies, interpret sensor events, detect threats, and respond locally.

Neuromorphic Edge AI offers a more efficient model for:

- real-time threat detection

- sensor-driven intelligence

- event-based vision

- robotics and autonomy

- distributed cybersecurity systems

- low-power inference in mission environments

The point is not that every AI workload should be neuromorphic. That would be techno-theater. The point is that for edge systems where responsiveness and energy discipline matter, neuromorphic design is increasingly the smarter fit.

The AI Cowboys and the move toward Neuromorphic Edge AI

At The AI Cowboys, this work has moved beyond theory and into applied development.

In the team’s public neuromorphic write-up, The AI Cowboys describe a system developed in the UT San Antonio ecosystem using an NVIDIA Jetson AGX Orin combined with a BrainChip Akida PCIe board. According to that published example, the team achieved the same continuous video anomaly detection result while reducing power consumption from 120 watts to 9 watts.

That is not a vanity metric. That is an operational shift.

When you dramatically reduce compute overhead while maintaining the mission outcome, you are not just optimizing performance. You are expanding the scope of where AI can be deployed, how long it can run, and how much infrastructure it requires to be useful in the field.

The AI Cowboys were also the first at UT San Antonio to implement a spiking neural network back in 2025, and this work supported a private government contract for RTTP, or Real Time Threat Protection. Because the RTTP engagement was private, its contract details, unlike the blog example, are not publicly disclosed. But the implication is important: this was not being explored as a lab curiosity. It was developed to support real-world, mission-relevant objectives.

That is a meaningful distinction.

There is a big difference between talking about edge AI and building edge AI for environments where performance, speed, and efficiency actually matter.

A perspective from Michael Pendleton

For Michael Pendleton and The AI Cowboys, the future of edge intelligence is not about forcing traditional AI architectures into smaller boxes. It is about building systems that can think in real time, adapt locally, and operate efficiently in environments where speed, power discipline, and mission performance matter most.

That is exactly why Neuromorphic Edge AI is gaining attention. It represents a move toward architectures that behave less like always-on infrastructure and more like responsive, event-driven intelligence built for the field.

Case studies: where neuromorphic systems are proving value

1. The AI Cowboys: Edge anomaly detection with Jetson + Akida

The AI Cowboys’ published hybrid deployment is a strong example of Neuromorphic Edge AI in action. By pairing edge hardware with neuromorphic processing, the system reportedly maintained the same video anomaly-detection outcome while reducing power draw from 120 watts to 9 watts.

That matters for any environment where battery life, thermal load, power access, or compute efficiency shapes what can realistically be deployed.
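A quick back-of-the-envelope calculation shows what the reported 120 watt to 9 watt change could mean in practice. The two power figures come from the published example; the 100 Wh battery is a purely hypothetical capacity chosen for illustration, and the sketch assumes both figures represent total system draw.

```python
def reduction_percent(before_w: float, after_w: float) -> float:
    """Percentage by which power draw dropped."""
    return (before_w - after_w) / before_w * 100.0


def reduction_factor(before_w: float, after_w: float) -> float:
    """How many times lower the new power draw is."""
    return before_w / after_w


def runtime_hours(battery_wh: float, draw_w: float) -> float:
    """Idealized runtime on a battery, ignoring conversion losses."""
    return battery_wh / draw_w


BEFORE_W = 120.0   # reported draw before (from the published example)
AFTER_W = 9.0      # reported draw after (from the published example)
BATTERY_WH = 100.0  # hypothetical battery capacity, not from the source

percent = reduction_percent(BEFORE_W, AFTER_W)
factor = reduction_factor(BEFORE_W, AFTER_W)
hours_before = runtime_hours(BATTERY_WH, BEFORE_W)
hours_after = runtime_hours(BATTERY_WH, AFTER_W)

print(f"Reduction: {percent:.1f}% ({factor:.1f}x lower)")
print(f"Runtime on {BATTERY_WH:.0f} Wh: {hours_before:.2f} h vs {hours_after:.2f} h")
```

Under those assumptions, the drop works out to a 92.5% reduction, or roughly 13 times lower draw, and the same hypothetical battery would last about 11 hours instead of about 50 minutes. Real deployments would see smaller gains once conversion losses and other system loads are included.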

For cybersecurity, real-time monitoring, and mission systems, lower-power inference can become a serious advantage rather than a nice-to-have feature.

2. Intel Loihi 2: event-driven adaptation

Intel’s work with Loihi 2 has helped validate the broader neuromorphic thesis: event-driven architectures can deliver meaningful gains in efficiency, speed, and adaptability for certain classes of workloads.

This is important because it shows the field is maturing beyond concept-stage storytelling. Neuromorphic computing is being taken seriously by major research and hardware organizations that understand the limitations of conventional scaling.

In other words, this is not fringe wizardry. The adults with chip fabs are in the room.

3. IBM TrueNorth: low-power neurosynaptic architecture

IBM’s TrueNorth remains one of the landmark examples in neuromorphic design. It demonstrated that large-scale neurosynaptic architectures could operate with radically different power characteristics than traditional systems.

TrueNorth did not just prove a chip concept. It helped prove a worldview: intelligence does not have to be built around constant instruction-shuffling and continuous energy drain. There are other ways to compute, and some are better aligned with how complex, responsive systems work in nature.

Why Neuromorphic Edge AI is especially relevant for RTTP and cybersecurity

Cybersecurity is full of environments where everything looks normal until it very suddenly does not. That makes it a natural fit for event-driven intelligence. A neuromorphic system can remain low-power and selective until a meaningful deviation appears. Once that anomaly shows up, the system can respond quickly at the edge without relying on heavyweight, always-on processing.

That creates a compelling fit for Real Time Threat Protection.

Instead of continuously throwing maximum compute at every signal, Neuromorphic Edge AI enables systems to prioritize meaningful events, adapt locally, and respond with lower latency and lower energy overhead. For distributed sensors, surveillance systems, tactical environments, and security infrastructure, that architecture starts to make a lot of sense. This is especially relevant in government and defense settings, where conditions are rarely ideal and computational inefficiency is not just expensive, it is operationally limiting.
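The "stay quiet until a meaningful deviation appears" pattern can be sketched in a few lines. This is not a spiking neural network and not the RTTP implementation; it is a plain threshold gate over a running baseline, used here only to illustrate the event-driven idea. The function name, threshold, smoothing factor, and traffic numbers are all hypothetical.

```python
def event_gate(samples, threshold=3.0, alpha=0.1):
    """Yield (index, value) only for samples that deviate from a running baseline.

    threshold: how far a sample must be from the baseline to count as an event.
    alpha: smoothing factor for the exponential moving average baseline.
    """
    baseline = None
    for i, value in enumerate(samples):
        if baseline is None:
            baseline = value  # first sample seeds the baseline
            continue
        if abs(value - baseline) > threshold:
            # Meaningful deviation: emit an event and leave the baseline
            # untouched so the anomaly does not pollute "normal".
            yield i, value
        else:
            # Quiet sample: cheap baseline update, nothing emitted.
            baseline += alpha * (value - baseline)


# Hypothetical connections-per-second readings with a short spike.
traffic = [10, 11, 10, 9, 10, 42, 45, 10, 11, 10]
events = list(event_gate(traffic))
print(events)  # [(5, 42), (6, 45)]
print(f"{len(events)} of {len(traffic)} samples needed further analysis")
```

Only the two spike samples pass through the gate, so any heavier downstream analysis would run on two inputs rather than ten. A neuromorphic system applies the same principle at the hardware level rather than in a software loop.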

The bigger strategic takeaway

Neuromorphic Edge AI is not about replacing all GPUs. It is about recognizing that the future of intelligent systems will be heterogeneous.

Some workloads will still demand massive centralized compute. Others will demand compact, efficient, adaptive intelligence operating close to the mission. The organizations that recognize that distinction early will be better positioned to build real solutions rather than just expensive demos.

The future of AI is not only larger models in larger data centers. It is also smarter architectures at the edge. Architectures that respond instead of constantly churning. Architectures that conserve energy instead of assuming endless power. Architectures that look less like legacy compute and more like cognition.

That is why Neuromorphic Edge AI deserves serious attention now.

We are entering a phase where AI must prove it can operate beyond the lab, beyond the cloud, and beyond the comfort of oversized infrastructure. The systems that succeed in that environment will be the ones built for responsiveness, efficiency, and mission reality.

And for organizations exploring next-generation threat detection, edge inference, autonomous systems, or government-ready AI deployment, it is a conversation worth having now.

Reach Out To Us!

To learn more about Neuromorphic Edge AI, spiking neural networks, RTTP, or potential partnership opportunities with The AI Cowboys, contact Michael Pendleton or visit www.theaicowboys.com.

If your organization is looking at real-time AI, low-power edge intelligence, or mission-ready systems for enterprise or government applications, this is where the conversation starts.